What’s covered

- Experiment 1: Route content by type – NMT for structured strings, LLM for dialogue

- Experiment 2: Move a failing locale to a full AI pipeline instead of cutting it

- Experiment 3: Build a custom LQA plugin using AI-assisted code

- Experiment 4: Chunk and tag scripts before sending them to a model

- Experiment 5: Run a blind vendor test to measure AI translation quality

- Where should you start with AI localization experiments?

- Frequently asked questions

Most writing about AI in game localization stays at the level of “it depends” and “results may vary.” This post doesn’t do that.

This article covers five specific, replicable AI localization experiments for game studios and localization programme managers. Each experiment is drawn from a Gridly panel discussion with three senior practitioners working in production game localization: Denis Ivanov (mobile localization lead at Belka Games), Mike Kim (Kong Studios), and Tucker Mills (the Bards Guild). The experiments range from routing strings by content type to running blind vendor quality tests — and each one includes the prerequisites, the process, and what changes when you implement it.

In a hurry? Here’s a quick comparison of all five experiments before we go into detail.

| Experiment | Pipeline maturity needed | Effort | Primary benefit |

|---|---|---|---|

| Route content by type | Early stage | Low | Reduces translator workload on mechanical strings |

| Switch failing locales to AI | Intermediate | Medium | Retains player base in at-risk markets |

| Build an LQA plugin with AI code | Intermediate | Medium | Catches display bugs earlier in production |

| Chunk and tag scripts before AI processing | Advanced | High | Improves tone consistency across large scripts |

| Run a blind vendor quality test | Advanced | Medium | Replaces anecdote with objective quality data |

Experiment 1: Route content by type – NMT for structured strings, LLM for dialogue

Summary: Neural machine translation (NMT) is better suited to repetitive, structured game strings — such as stat modifiers and skill descriptions — while large language models (LLMs) produce stronger results on character dialogue because they can apply persona-level rewriting instructions. Routing game localization content by type improves consistency and reduces cost across both categories.

In game localization, not all content has the same translation requirements. Tucker Mills, a localization practitioner at the Bards Guild, draws a clear line between two categories: repetitive, structured content like stat modifiers and skill descriptions, and narrative content like character dialogue. Each category calls for a different AI tool.

For structured strings, the recommended approach is NMT tools like DeepL rather than large language models. Once you establish a consistent syntax — for example, “every 3 turns, increase [character]’s attack by 5” — an NMT engine handles the remaining hundred variants of that pattern quickly and cheaply. Structured strings also get rebalanced frequently during game development, so keeping human translators focused on them is a poor use of time.

For character dialogue, the approach flips. The recommended workflow is to run a first-pass translation and then prompt an LLM to rewrite it in a specific persona — keeping the meaning intact but adapting the speech patterns for a particular character voice (for example, rewriting with the patterns of an eight-year-old). The result is a more consistent character voice than a human translator working in isolation might produce, and iterations are faster to review.

What does routing game content by type require?

Routing game localization strings by content type works because consistent source text allows AI engines to apply patterns reliably. Without source consistency, rework compounds downstream regardless of which AI engine is used. If source English is fluid and changing, no AI tool maintains quality across translation cycles.

Key prerequisites:

- Standardized, consistent source content established before choosing an AI engine.

- Strings classified by content type in your localization management system.

- A clear decision framework for which locales justify NMT-only versus LLM-assisted workflows.

What changes when you route content by type?

Human translators stop spending time on content that does not require their expertise. Translator effort shifts toward narrative work, linguistic quality assurance (LQA), and context review — the higher-value parts of the localization job — while AI handles the mechanical string layer.

Experiment 2: Move a failing locale to a full AI pipeline instead of cutting it

Summary: In game localization, moving an underperforming language to an AI-only workflow is a cost-effective alternative to dropping it entirely. The practice dramatically reduces the cost of maintaining a locale while keeping the game available to players in that market — replacing a binary cut-or-keep decision with a third option.

Denis Ivanov describes a decision that used to be binary in game localization: if a language failed to hit performance benchmarks or ROI metrics, you cut it. Players in that region simply lost support.

With a mature AI localization pipeline, there is a third option. Instead of dropping the language entirely, the team moves borderline locales to a fully AI-driven workflow. The cost drops significantly, the language stays live, and players do not receive a message telling them their region is no longer supported.

The same logic applies when proposing new languages to management. Denis Ivanov notes that the barrier to adding a new locale has dropped considerably under an AI-first approach — studios no longer need to guarantee immediate ROI to justify the investment in a new language.

What does moving a failing locale to AI require?

Moving an underperforming locale to an AI localization pipeline is not a tactic for teams just starting out with AI. This approach works best only when:

- The language is borderline underperforming rather than critically failing.

- The AI localization pipeline is mature enough to run without active human post-editing on every string.

- Quality from the AI pipeline is acceptable to players in that market, even if it is not at the same level as a fully human-reviewed translation.

What changes when a failing locale moves to an AI pipeline?

Player retention improves in markets that would previously have been cut from localization support. The internal conversation around new language additions also shifts — from “can we afford this?” to “what is our minimum viable AI pipeline for this locale?”

Experiment 3: Build a custom LQA plugin using AI-assisted code

Summary: AI-assisted coding tools allow technically minded localization programme managers to build working LQA tooling in hours rather than waiting months for engineering support. Tucker Mills from the Bards Guild built a functional Unity LQA plugin using Claude Code in a single session, without a formal engineering ticket.

Localization departments are chronically under-resourced when it comes to engineering support. Tucker Mills, localization practitioner at the Bards Guild, found a way around this constraint using Claude Code — an AI-assisted coding tool from Anthropic.

In a few hours, Tucker Mills built a working prototype of an LQA plugin for Unity that automatically tags text overruns and text cutoff issues — the kind of display bugs that typically get caught late in production during manual playtesting. The plugin is designed to flag these issues automatically and upload them as LQA tickets to a project management tool like Jira or Gridly, so problems surface during development rather than at the end of a production cycle.

The broader point from this experiment is that AI-assisted coding tools are giving technically minded localization programme managers the ability to build tooling that would previously have required a formal engineering ticket and months on a sprint backlog. The LQA plugin is one example; the principle extends to any localization tooling gap that a technically minded PM identifies.

What does building a custom LQA plugin with AI require?

Some technical confidence is needed — this is not a zero-code approach. Tucker Mills’ framing is that programme managers who are technically minded can build this kind of tooling without being software engineers. The effort is measured in hours, not in sprints or engineering quarters.

What changes when localization teams build their own LQA tooling?

- LQA issues that previously surfaced late in production are caught earlier in the development cycle.

- Engineering dependency for localization tooling drops significantly.

- Localization teams that previously waited months for internal engineering resources can prototype, test, and iterate on their own solutions.

Experiment 4: Chunk and tag scripts before sending them to a model

Summary: In game localization, sending large scripts to an AI model without structure or metadata produces inconsistent output. Chunking content by character or scene and attaching context metadata before processing improves tone consistency and reduces out-of-character errors — because the language model understands who is speaking and why, not just what the text says.

A common mistake when using AI for game localization at scale is treating the model like a bulk processor — feeding in everything at once and expecting consistent output. Denis Ivanov from Belka Games is direct on this point: do not send all the text into the model at once.

The recommended approach is to divide game localization content into meaningful chunks — by character, scene, or content type — and attach metadata to each chunk before it enters the model. That metadata should tell the model who is speaking, what the content is for, and what constraints apply to the translation.

In practice, a well-structured chunk sent to an LLM might look like this:

Character: Mira (veteran soldier, speaks in clipped, direct sentences, no contractions)

Scene: Post-battle debrief

Tone: Exhausted but composed

Strings: [12 dialogue lines from scene 4]

Task: Translate to French, preserve character voice

Contrast that with the unstructured approach — a single file of 800 mixed strings, no character labels, no scene context, no tone guidance. The model has no way to know that line 43 is spoken by a child NPC and line 44 is a military commander. Both end up sounding the same.

A more granular example: if a character always avoids slang but your source script accidentally includes it, attaching a character sheet as metadata lets the model flag the inconsistency rather than translate it through. Without the metadata, it translates the error faithfully.

Mike Kim from Kong Studios reinforces this with a specific recommendation: source metadata from the game’s design team wherever possible. Character sheets, narrative documentation, and design briefs all provide context that measurably improves AI output quality in game localization. Without that source context, the language model is guessing at intent.

Tucker Mills adds the structural requirement: for chunking and tagging to work reliably at scale, strings must be properly classified and labelled in the localization management system before they go to the model. A team should be able to ask an LLM to review all of a specific character’s dialogue for tone consistency — which requires being able to identify and retrieve those strings in the first place.

What does chunking and tagging game localization content require?

String classification and tagging need to be in place before running game localization content through an AI model. If localization data is unstructured, chunking and tagging will not produce reliable results. The metadata work is often more effort than teams expect, and it is the actual prerequisite — not the choice of AI engine.

What changes when you chunk and tag before AI processing?

- Tone-of-voice consistency improves across large character scripts.

- Out-of-character flags in LQA are reduced.

- Teams have a defensible, repeatable process for explaining how AI output quality is being managed.

Related reading: How Belka Games approaches live service localization at speed — a closer look at how a mobile-first game studio manages continuous content updates with AI in the pipeline.

Experiment 5: Run a blind vendor test to measure AI translation quality

Summary: A blind vendor test — where a third-party evaluator scores batches of AI-translated and human-translated game content without knowing which is which — produces objective, repeatable quality data. This method, developed by Belka Games, replaces internal opinion with comparable numbers that can be tracked over time and used to make the case to leadership.

Denis Ivanov and the localization team at Belka Games developed a methodology for evaluating AI translation quality in game localization without evaluator bias built in. Belka Games sends batches of text — some AI-translated, some human-translated — to a third-party vendor they do not work with regularly. The vendor scores the content without knowing which translation method produced each batch.

The vendor scores game localization content on errors per 1,000 words, weighted by severity rather than raw error count. This produces a number that is comparable across batches and repeatable over time. When Belka Games ran this test against raw, unrefined AI output — no fine-tuning, no custom models, text run through a chat interface without configuration — error rates were high. When the same test ran against a properly trained model with embeddings and fine-tuning in place, the scores matched human translation quality.

The blind vendor test also functions as a management communication tool in game localization. A test with objective scoring is a stronger argument for AI investment than internal anecdote, and it gives leadership a concrete data point to evaluate.

What does running a blind vendor quality test require?

- A polished, properly configured AI model — not an early-stage or untuned implementation.

- Access to a third-party vendor willing to score game localization content without knowing its origin.

- A consistent scoring framework — for example, errors per 1,000 words weighted by severity.

Denis Ivanov is explicit on this point: the gap between “AI used through a chat interface” and “a properly configured model with fine-tuning and embeddings” is significant. Running a blind test on an early-stage implementation will produce poor scores that may undermine internal confidence in AI before the pipeline has been properly developed.

What changes when game studios use blind vendor testing for AI quality?

- Quality assessments are based on objective data rather than internal impressions.

- Studios have a repeatable methodology for ongoing AI quality evaluation as their pipeline develops.

- Conversations with leadership about AI localization investment move from anecdote to evidence.

Where should you start with AI localization experiments?

These five AI localization experiments are not equal in effort or risk. The right starting point depends on pipeline maturity.

If you are early in AI localization adoption:

Start with experiment 1 — routing game content by type. Splitting strings by content type is low-risk, immediately actionable, and does not require a mature pipeline. Investing in source text consistency, the core prerequisite, is worthwhile regardless of which other AI experiments a studio runs next.

Once you have an AI pipeline to evaluate:

Run experiment 5 — the blind vendor quality test. Even a rough blind test produces more useful information than internal opinion about where AI output quality actually stands.

When the localization pipeline foundation is in place:

Experiments 2, 3, and 4 require more infrastructure but offer significant leverage at scale. Experiment 2 is best suited to studios managing underperforming locales. Experiment 3 is best suited to programme managers with technical confidence who are blocked by engineering resource constraints. Experiment 4 is best suited to studios managing large narrative game scripts with multiple distinct character voices.

The common thread across all five experiments is that AI in game localization does not work when treated as a shortcut. It works because structured inputs, proper tooling, and quality measurement create a system that improves over time — the same rigour any other part of a game production pipeline demands.

Frequently asked questions

What is the most important prerequisite for AI localization in games?

Consistent, well-structured source content is the most important prerequisite for AI game localization. Multiple practitioners — including Denis Ivanov at Belka Games and Tucker Mills at the Bards Guild — identified source text quality as the single factor that limits AI performance more than any engine choice. If source English is inconsistent across a game’s strings, no AI localization tool maintains quality downstream.

Is AI translation good enough to replace human translators in games?

AI translation is well-suited to repetitive, structured game content — stat modifiers, UI strings, and templated skill descriptions — but is not a replacement for human translators across all game localization tasks. Human translators remain critical for narrative dialogue, cultural adaptation, and linguistic quality assurance (LQA). The most effective game studios use AI to reduce the volume of mechanical string work reaching human translators, not to eliminate the human review layer.

How do you evaluate AI translation quality in a game localization pipeline?

The most reliable method for evaluating AI translation quality in game localization is a blind vendor test. This involves sending batches of AI-translated and human-translated content to a third-party vendor who scores them without knowing which method produced each batch. Scoring is done on errors per 1,000 words, weighted by severity. Belka Games uses this methodology to produce repeatable, comparable quality data across translation batches and over time.

Can small localization teams build custom AI tooling without engineering support?

Yes — AI-assisted coding tools allow technically minded localization programme managers to prototype working game localization plugins without engineering support. Tucker Mills from the Bards Guild built a working Unity LQA plugin using Claude Code in a single session. The plugin, which would previously have required a formal engineering ticket, automatically tags text overruns and cutoff issues and uploads them as LQA tickets to project management tools.

What is chunking in AI-assisted game localization?

Chunking in AI-assisted game localization means dividing large scripts into smaller units — by character, scene, or content type — before sending them to a language model. Each chunk is tagged with metadata (who is speaking, what the content is for, what constraints apply) so the model processes each unit with full context rather than inferring intent from text alone. Chunking improves tone-of-voice consistency across large character scripts and reduces out-of-character errors in AI-generated translations.

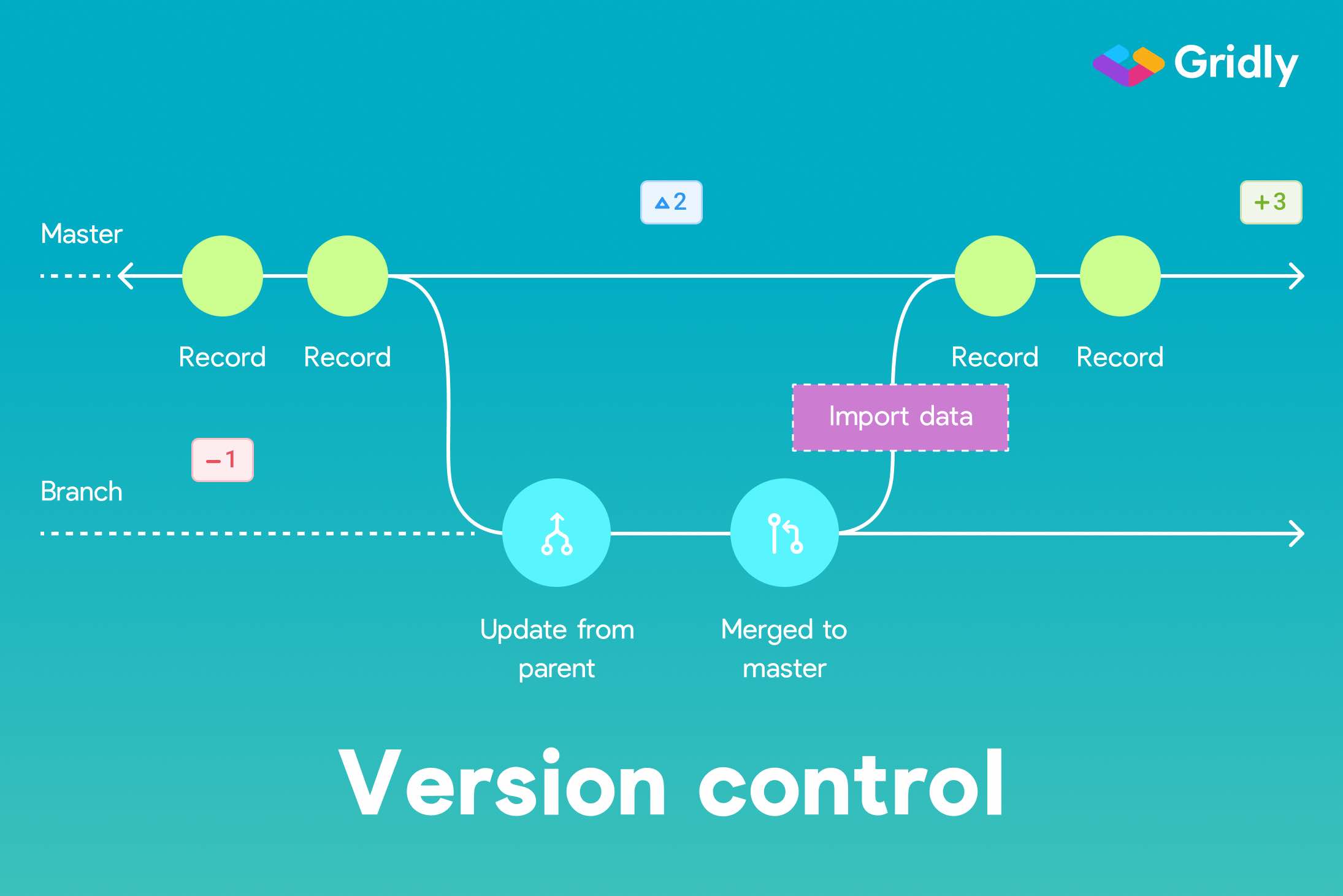

How does Gridly support AI localization workflows for game studios?

Gridly is a localization management platform designed for game studios and digital product teams managing continuous, high-volume content updates. Gridly supports AI-assisted game localization workflows through structured string management, content tagging by type, branching for live service game updates, and integrations with translation engines including DeepL. Game studios use Gridly to route strings to the correct AI or human translation pipeline, manage LQA tickets, and maintain localization consistency across fast-moving production cycles.

The experiments in this post came out of a live panel discussion with Denis Ivanov, Mike Kim, and Tucker Mills. Watch the full session to hear the practitioners walk through each tactic in their own words.

Ready to build an AI localization pipeline that actually works in production? Try Gridly free or book a demo to see how leading game studios are running these workflows today.

Author

Quang Pham

Quang has spent the last 5 years as a UX and technical writer, working across both B2C and B2B applications in global markets. His experience translating complex features into clear, user-friendly content has given him a deep appreciation for how localization impacts product success.

When he's not writing, you'll likely find him watching Arsenal matches or cooking.